AI & Us: A Critical Exploration

Welcome to your interactive study kit. This guide transforms a lesson plan on Artificial Intelligence into a dynamic learning experience. Explore the core concepts, dive deeper into specific topics, and test your knowledge.

Formal Lesson Plan

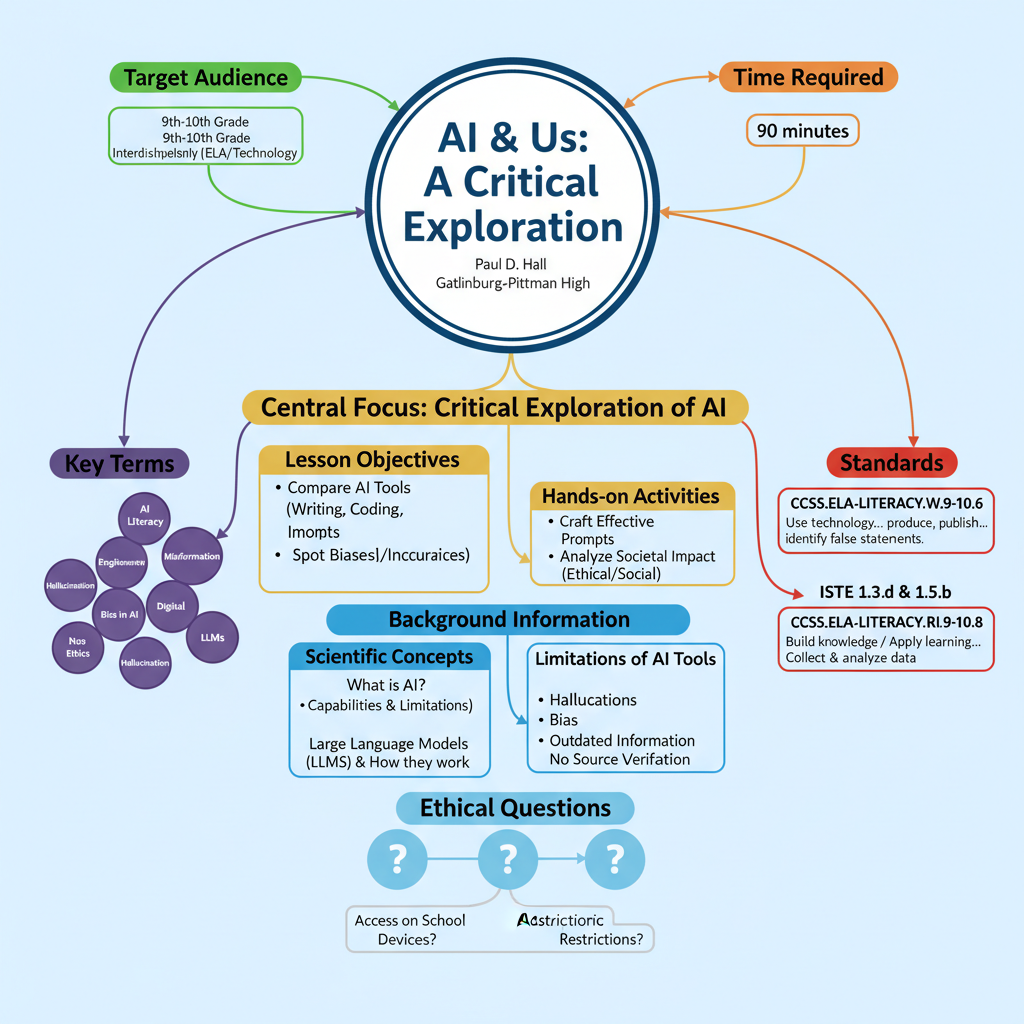

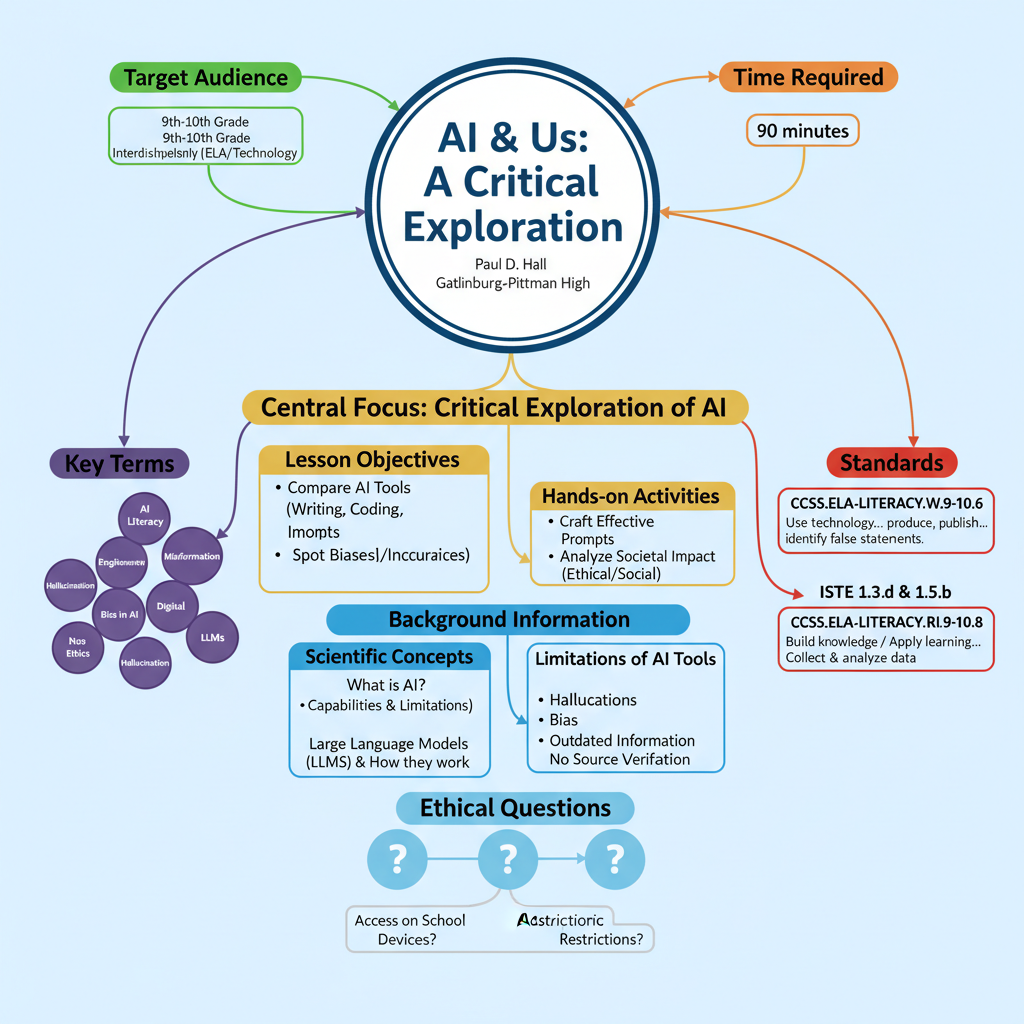

Stage 1: Desired Results

Established Goals:

- CCSS.ELA-LITERACY.W.9-10.6: Use technology, including the Internet, to produce, publish, and update individual or shared writing products, taking advantage of technology's capacity to link to other information and to display information flexibly and dynamically.

- CCSS.ELA-LITERACY.RI.9-10.8: Delineate and evaluate the argument and specific claims in a text, assessing whether the reasoning is valid and the evidence is relevant and sufficient; identify false statements and fallacious reasoning.

- ISTE 1.3.d: Students build knowledge by exploring real-world issues and gain experience in applying their learning in authentic settings.

- ISTE 1.5.b: Students collect data or identify relevant data sets, use digital tools to analyze them and represent data in various ways to facilitate problem-solving and decision-making.

- Develop digital literacy and critical thinking skills in evaluating technology.

- Foster responsible technology use and digital citizenship.

Understandings: Students will understand that...

- Artificial Intelligence (AI) tools, particularly Large Language Models (LLMs), operate on patterns learned from vast datasets. This enables them to perform human-like tasks, but they are neither sentient nor infallibly accurate.

- AI tools have distinct capabilities and limitations, including "hallucinations," biases, and outdated information, necessitating critical evaluation of their outputs.

- Effective "prompt engineering" is crucial for eliciting desired and accurate responses from AI, demonstrating that human input significantly shapes AI's utility.

- AI's widespread integration profoundly impacts society, raising complex ethical, social, and academic integrity questions that require thoughtful consideration and responsible engagement.

- Evaluating AI-generated content for accuracy, bias, and relevance is a fundamental component of modern digital literacy.

Essential Questions:

- How do we discern the reliability and truthfulness of information generated by artificial intelligence?

- In what ways can AI reflect or perpetuate societal biases, and what are our responsibilities in addressing them?

- When is the use of AI a powerful tool for learning and creativity, and when does it compromise academic integrity or human ingenuity?

- How can we harness AI's potential while mitigating its risks for individuals and society?

- What are the ethical boundaries we must establish and maintain as AI continues to evolve and integrate into our lives?

Learning Objectives (Bloom's Taxonomy):

- Understand: Students will be able to explain the fundamental principles of how AI tools, particularly LLMs, process information and generate responses, differentiating them from traditional search engines.

- Analyze: Students will be able to compare and contrast the performance of different AI tools (generalist vs. specialist) across various tasks (e.g., creative writing, coding, image generation), identifying their respective strengths and weaknesses.

- Apply: Students will be able to craft effective and precise prompts for AI tools to achieve desired outcomes, demonstrating an understanding of prompt engineering principles.

- Analyze: Students will be able to identify and critically evaluate potential biases, inaccuracies, or "hallucinations" in AI-generated content.

- Evaluate: Students will be able to explain the critical importance of fact-checking and verifying AI-generated information against reliable sources.

- Analyze: Students will be able to discuss and analyze the ethical, social, and academic implications of AI's impact on society and future developments.

- Create (Optional Extension): Students will be able to propose a novel AI tool designed to solve a specific problem in their school or community, outlining its function and benefits.

Stage 2: Assessment Evidence

Performance Tasks:

- AI Detective Challenge Handout ("AI & Us: Exploration Guide"): Students will complete this guide, documenting their observations, comparisons, and reflections on general-purpose and specialized AI tool outputs across various tasks (creative writing, science explanation, coding, image generation). This demonstrates their ability to apply AI tools, analyze outputs, and compare performance.

- Ethical Discussion Participation: Students will actively participate in the "Fact Checkers & Ethics Roundtable," sharing their insights, defending their reasoning, and engaging in respectful dialogue about AI's societal implications, biases, and academic integrity. This assesses their ability to analyze and evaluate complex ethical dilemmas.

- Prompt Refinement (Early Finisher/Extension): Students who refine AI-generated responses by iteratively improving prompts will document their process and explain how changes impacted the output, demonstrating an advanced application and analysis of prompt engineering.

- AI Tool Design Proposal (Optional Homework): Students will design a hypothetical AI tool to address a community/school problem, describing its function and benefits, which assesses their ability to synthesize understanding and create an innovative solution.

Other Evidence:

- Opening Hook Discussion: Teacher observation of student engagement and initial reasoning during the "AI vs. Human" paragraph comparison provides formative data on prior knowledge and initial analytical skills.

- Circulating Observations: Throughout the "AI Detective Challenge," the teacher will observe students' ability to access tools, phrase prompts, interpret outputs, and collaborate, noting any misconceptions or areas requiring support.

- "Hallucination Test" Responses: Student responses and reactions to the AI's confident but incorrect answers will reveal their understanding of AI limitations and the need for verification.

- Reflection Questions (Part 3 of Handout): Written responses to the handout's reflection questions will provide insight into individual student understanding of AI's implications and their ability to synthesize learning.

- Informal Presentations (Early Finisher/Extension): Students researching specific ethical issues will demonstrate their analytical and communication skills in presenting their findings.

Stage 3: Learning Plan

Time Required: 90 minutes

Target Grade: 9th-10th Grade, Interdisciplinary (ELA/Technology)

Learning Activities:

- A. Opening – Hook: AI vs. Human (10 minutes)

    - Teacher presents two short paragraphs (one AI-generated, one human-written) on the board (e.g., descriptive forest scene).

    - Students engage in a guessing game: "Which paragraph was written by AI?"

    - Teacher facilitates a brief discussion using guiding questions: "What clues led to your decision? Did one feel more emotional or too polished? Were there patterns?" (Connects to Analyze - evaluating characteristics of text).

    - Teacher introduces the core concept of AI: computer systems performing human-like tasks by learning from data patterns, clarifying that AI is not sentient.

    - Teacher announces the day's mission: "You’re becoming AI Detectives – exploring tools, testing strengths/weaknesses, and reflecting on real-world impact." (Sets purpose for Understand and Analyze).

- B. Activity – AI Detective Challenge (60 minutes)

    - Part 1: The Generalist Challenge (30 minutes)

        - Teacher distributes the "AI & Us: Exploration Guide" handout.

        - Teacher explains that students will use two general-purpose AI tools (e.g., ChatGPT, Gemini, Copilot, Claude) to complete three specific tasks:

            - Creative Writing Prompt: "Write a short, dramatic story about a pair of mismatched mittens."

            - Explanatory Science Prompt: "Explain the concept of fusion to a 6th grader."

            - Basic Coding Prompt: "Write a simple, annotated JavaScript function that changes the text color of a webpage when a button is clicked."

        - Students apply prompt engineering by entering identical prompts into both AI tools. (Directly addresses Apply - crafting prompts).

        - Students analyze the responses, comparing and contrasting their quality, creativity, accuracy, and clarity, and record observations on the handout. (Addresses Analyze - comparing and contrasting AI outputs).

        - Teacher circulates, assisting with prompt phrasing, encouraging experimentation, and prompting deeper thinking about the differences observed. (Formative assessment of Apply and Analyze).

        - Differentiation (Extra Support): Provide pre-written prompts for struggling students; pair students for collaborative support.

        - Differentiation (ELLs): Encourage use of translation tools for prompts and responses; pair with stronger English speakers.

    - Part 2: The Specialist Challenge (20 minutes)

        - Teacher directs students to Part 2 of the handout.

        - Teacher explains students will now explore AI tools designed for specific tasks (e.g., Copilot Designer for image creation, QuillBot for text refinement, Photomath for math problems).

        - Students use the suggested specialized AI tools to complete three tasks from the handout.

        - Students record which tool they used and their observations regarding performance, ease of use, strengths, and limitations. (Addresses Analyze - comparing generalist vs. specialist tools, evaluating their utility).

        - Teacher circulates, supporting access, clarifying instructions, and encouraging reflection on how these tools differ from general-purpose AIs. (Formative assessment of Analyze).

    - Part 3: Fact Checkers & Ethics Roundtable (10 minutes)

        - Students complete Part 3 of the handout, answering reflection questions about their discoveries and overall impressions. (Prepares for Evaluate and Analyze).

        - Teacher facilitates a whole-class discussion, inviting students to share surprising discoveries.

        - Teacher conducts the "Hallucination Test" by entering a tricky factual question into an AI tool (e.g., "Who won the World Series in 1888?").

        - Students observe the AI generating a confident but incorrect answer.

        - Teacher emphasizes the importance of verifying AI-generated information. (Directly addresses Evaluate - explaining verification importance).

        - Teacher launches an ethical discussion using guiding questions: "What are the dangers of AI generating false information? Can AI be biased? Who is responsible for AI errors in homework?" (Engages students in Analyze and Evaluate - discussing ethical impacts).

        - Students discuss both the benefits and risks of AI in everyday life, education, and society.

- C. Closure (10 minutes)

    - Teacher revisits the learning objectives, prompting students to reflect on how their understanding of AI has changed.

    - Students share final takeaways or lingering questions from the discussion.

    - Optional Homework (Create): "Imagine you’re an AI designer. What new AI tool would you create to solve a problem in your school or community? What would it do, and how would it help?" (Opportunity for Create).

- Differentiation (Early Finishers/Extension):

    - Refine an AI-generated response by iterative prompt adjustments, documenting changes and impact. (Advanced Apply and Analyze).

    - Research a specific AI ethical issue (e.g., deepfakes, algorithmic bias) and prepare a short presentation. (Advanced Analyze and Evaluate).

    - Design a misleading prompt to make AI fail, then explain the failure. (Challenging Analyze of prompt engineering).

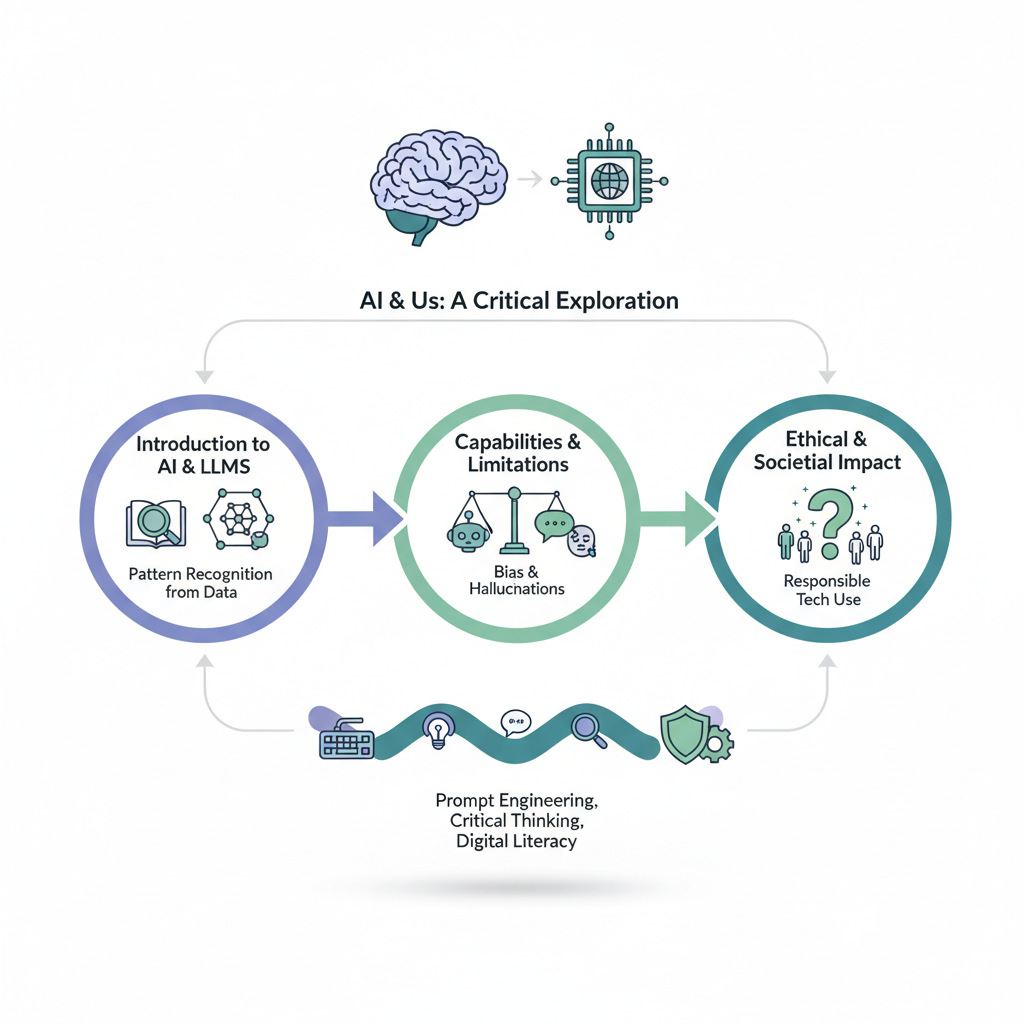

Summary & Key Points

This document outlines a 90-minute interdisciplinary lesson plan titled "AI & Us: A Critical Exploration" for 9th-10th graders, focusing on artificial intelligence. The lesson aims to teach students to critically evaluate AI tools by comparing their capabilities and limitations through hands-on activities. Key areas of focus include understanding how AI works (specifically Large Language Models), identifying its inherent biases and potential for "hallucinations," and engaging in discussions about the ethical and societal impacts of AI. The lesson emphasizes prompt engineering, digital literacy, critical thinking, and responsible technology use.

Main Topics:

- Introduction to AI and Large Language Models (LLMs): Definition of AI, how LLMs function (pattern recognition from data), and examples of common tools (ChatGPT, Gemini).

- Capabilities and Limitations of AI: Exploring what AI can do (creative writing, coding, explanations) and its shortcomings (hallucinations, bias, outdated information, lack of source verification).

- Prompt Engineering: The skill of crafting effective prompts to guide AI responses.

- Types of AI Tools: Differentiating between general-purpose AIs and specialized AI tools.

- Digital Literacy and Critical Evaluation: Emphasizing the need to fact-check AI-generated content and recognize its potential inaccuracies.

- Ethical and Societal Implications of AI: Discussions around truth and accuracy, bias and fairness, academic integrity, and the broader impact of AI on society and the future.

- Addressing Misconceptions: Strategies for teachers to counter common student misunderstandings about AI.

- Logistical Considerations: Guidelines for teachers on preparing for the lesson, managing tech access, and differentiating instruction.

Top 5 Key Takeaways:

- AI is a Tool, Not an Oracle: While powerful, AI can "hallucinate," stating incorrect information with confidence, and it can reflect biases present in its training data. Critical fact-checking is therefore essential.

- Prompt Engineering is Key: The quality of AI output is directly influenced by the clarity and specificity of the user's prompt; mastering prompt engineering is crucial for effective AI interaction.

- Different AIs for Different Tasks: AI tools vary in their capabilities, with general-purpose LLMs handling broad requests and specialized AIs excelling in niche tasks, requiring users to choose the right tool for the job.

- Ethical Considerations are Paramount: The proliferation of AI raises significant ethical questions regarding accuracy, bias, academic integrity, and societal impact, which students must actively engage with and critically analyze.

- Digital Literacy for the AI Age: Navigating AI responsibly requires a new level of digital literacy, encompassing an understanding of AI's mechanisms, its limitations, and the ability to critically evaluate and verify its outputs.

Document Outline

AI & Us: A Critical Exploration

- I. Lesson Overview

- A. Title: AI & Us: A Critical Exploration

- B. Submitter: Paul D. Hall (English/Technology)

- C. Target Audience: 9th-10th Grade, Interdisciplinary (ELA/Technology)

- D. Time Required: 90 minutes

- E. Established Goals (Standards)

- 1. CCSS.ELA-LITERACY.W.9-10.6 (Technology for writing)

- 2. CCSS.ELA-LITERACY.RI.9-10.8 (Evaluating arguments/claims)

- 3. ISTE 1.3.d (Real-world issues & application)

- 4. ISTE 1.5.b (Data analysis with digital tools)

- F. Lesson Objectives (Student Learning Outcomes)

- 1. Compare and contrast AI tool performance (creative writing, coding, image generation).

- 2. Craft effective prompts for AI.

- 3. Spot biases/inaccuracies in AI content & explain verification importance.

- 4. Discuss and analyze AI's ethical and social impact.

- G. Central Focus

- 1. Critical exploration of AI capabilities and limitations.

- 2. Hands-on comparison of generalist vs. specialist AIs.

- 3. Evaluation of AI outputs.

- 4. Discussions on ethics, misinformation, and AI's future.

- 5. Emphasis: Digital literacy, critical thinking, responsible technology use.

- H. Key Terms

- 1. AI literacy

- 2. Prompt engineering

- 3. Misinformation

- 4. Digital ethics

- 5. Hallucination

- 6. Bias in AI

- 7. LLMs (Large Language Models)

- II. Background Information (for Teachers)

- A. Scientific Concepts of AI

- 1. Definition: Computer systems performing human-like intelligence tasks.

- 2. Focus: Large Language Models (LLMs) (e.g., ChatGPT, Gemini, Claude, Copilot).

- 3. How LLMs work: Predicting next words based on patterns in massive datasets.

- B. Limitations of AI Tools

- 1. Hallucinations: Confident but incorrect answers (invented facts, non-existent sources).

- 2. Bias: Reflects biases in training data (stereotypes, unbalanced perspectives).

- 3. Outdated Information: Limited to data available up to a certain point.

- 4. No Source Verification: Does not cite real-time sources unless prompted.

- C. Ethical Questions for Student Exploration

- 1. Accuracy & Truth: How to verify AI information?

- 2. Bias & Fairness: Can AI reinforce stereotypes?

- 3. Academic Integrity: When is AI use helpful vs. dishonest?

- D. School AI Policy Considerations

- 1. Student access to AI tools on school devices.

- 2. Restrictions on creating accounts/logins.

- 3. Alternatives if access is limited (pre-generated outputs, teacher demos).

- III. Pedagogical Considerations

- A. Common Student Misconceptions & How to Address Them

- 1. "AI is always right." -> Hallucination Test, fact-checking.

- 2. "AI is just like Google." -> Pattern generation vs. real-time search.

- 3. "I don't know how to write a good prompt." -> Modeling, experimentation, specificity.

- 4. "All AI tools are the same." -> Compare general-purpose vs. specialized.

- 5. "I can't access the tools." -> Provide alternatives.

- B. Strategies for Inaccessible AI Tools

- 1. Use pre-generated AI responses.

- 2. Pair students for shared access.

- 3. Teacher-led demonstrations.

- 4. Offline alternatives (student-generated responses for comparison).

- C. Preparation Checklist (Before the Lesson)

- 1. Review school AI policy & test tool access.

- 2. Choose & test general-purpose AI tools (2+).

- 3. Choose & test specialized AI tools (1+ per task).

- 4. Print "AI & Us: Exploration Guide" handout.

- 5. Prepare AI vs. human sample paragraphs for hook.

- 6. Prepare/test "hallucination test" questions.

- 7. Optional: Print discussion questions/homework prompt.

- D. Required Student Background Knowledge

- 1. Basic understanding of technology use (devices, internet navigation).

- 2. Minimum AI knowledge: Definition, works by patterns, not a search engine.

- 3. Digital Citizenship & Responsibility: Critical evaluation of online content, academic honesty.

- 4. Basic Reflection & Comparison Skills: Compare texts, critical thinking, respectful discussion.

- IV. Materials

- A. "AI & Us: Exploration Guide" handout

- B. Two sample paragraphs (AI-generated, human-written)

- C. Devices with internet access

- D. Access to AI tools (e.g., ChatGPT, Gemini, Copilot, Claude, Photomath, Grammarly)

- E. Projector or shared screen

- V. Lesson Instruction Sequence

- A. Opening – Hook (10 minutes)

- 1. Display AI vs. human paragraphs.

- 2. Guide discussion on clues, emotional resonance, patterns.

- 3. Introduce AI concept and how it works.

- 4. Announce day's mission: "AI Detectives."

- B. Activity – AI Detective Challenge (60 minutes)

- 1. Part 1: The Generalist Challenge (30 minutes)

- a. Distribute handout.

- b. Students use two general-purpose AIs for:

- i. Creative writing prompt.

- ii. Explanatory science prompt.

- iii. Basic coding prompt.

- c. Students compare responses and record observations.

- d. Teacher circulates for support and guidance.

- 2. Part 2: The Specialist Challenge (20 minutes)

- a. Students explore specialized AI tools (e.g., image creation, text refinement, math).

- b. Students complete specialized tasks.

- c. Students record tool used and observations.

- d. Teacher circulates for support and guidance.

- 3. Part 3: Fact Checkers & Ethics Roundtable (10 minutes)

- a. Students answer reflection questions on handout.

- b. Whole-class discussion: surprising discoveries.

- c. Conduct "Hallucination Test" with tricky factual question.

- d. Emphasize importance of verifying AI info.

- e. Facilitate ethical discussion on:

- i. Dangers of false AI information.

- ii. AI bias.

- iii. Responsibility for AI errors.

- iv. Benefits and risks of AI.

- C. Closure (10 minutes)

- 1. Revisit learning objectives; student reflection on understanding.

- 2. Student sharing of takeaways/questions.

- 3. Optional Homework: Design a new AI tool to solve a problem.

- VI. Differentiation

- A. For Extra Support

- 1. Pair students.

- 2. Provide pre-written prompts.

- B. For English Language Learners (ELLs)

- 1. Encourage translation tools/AI.

- 2. Pair with stronger English speakers.

- C. For Early Finishers (Extensions)

- 1. Refine AI-generated responses through iterative prompt improvement.

- 2. Research specific AI ethical issues (e.g., deepfakes, algorithmic bias).

- 3. Design misleading prompts and explain the failure.

- VII. Assessment

- A. Formative Assessment (Ongoing throughout the lesson)

- 1. Observation of student engagement and discussion participation.

- 2. Completion and quality of "AI & Us: Exploration Guide" handout.

- 3. Student responses to reflection questions.

- 4. Participation in ethical discussions and "Hallucination Test."

- 5. (Implicit) Teacher check-ins during activity circulation.

For a more detailed explanation, see the Detailed Study Guide.

Detailed Study Guide

This document outlines a critical exploration of Artificial Intelligence (AI) for high school students, aiming to foster digital literacy and responsible technology use. It delves into both the exciting capabilities and crucial limitations of AI tools, prompting students to think critically about their impact on society.

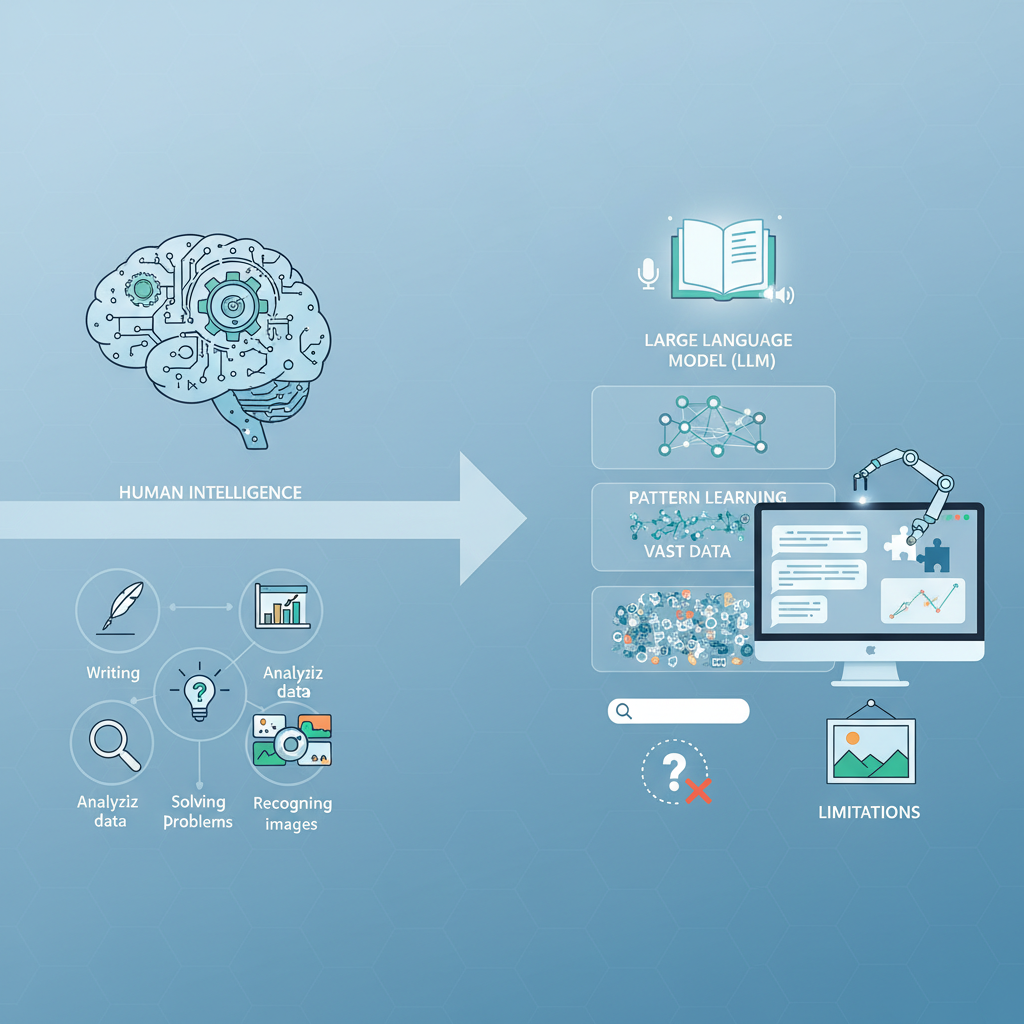

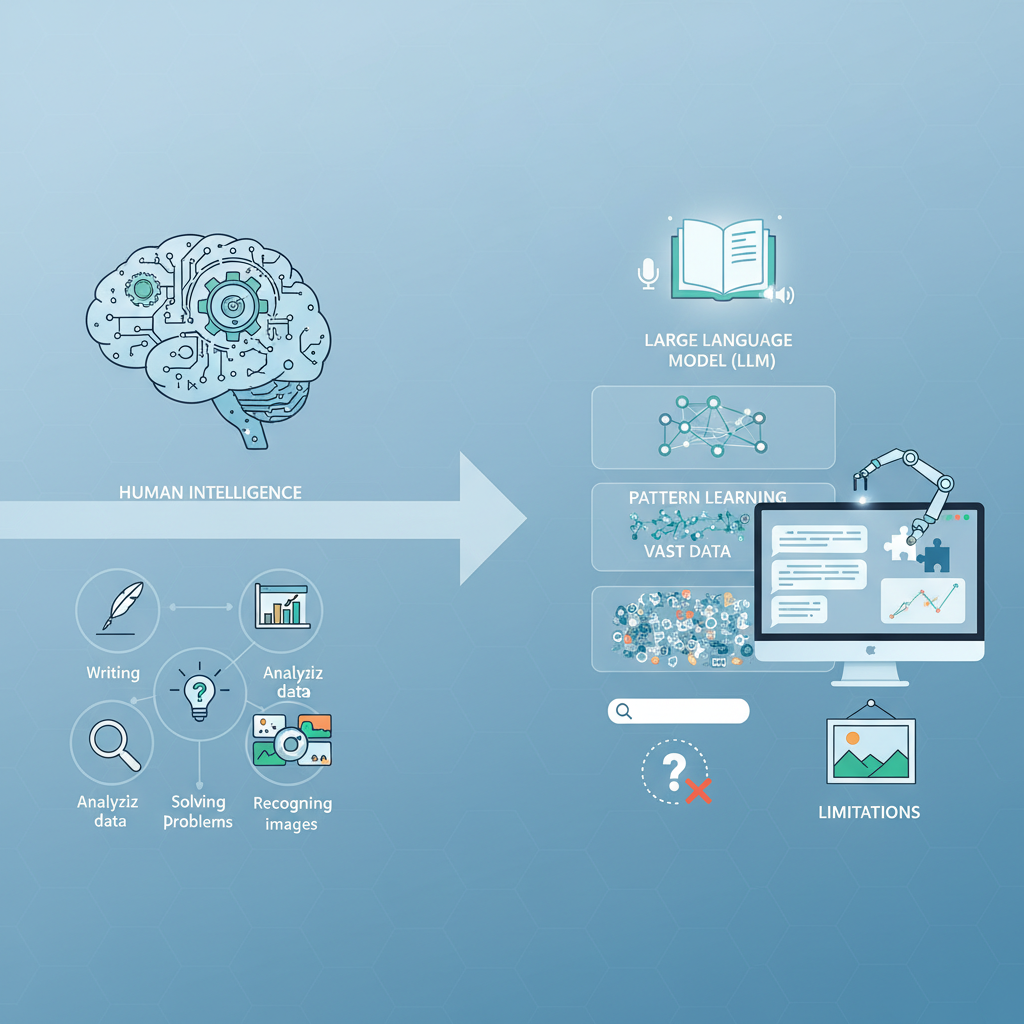

Introduction to Artificial Intelligence (AI)

Artificial Intelligence (AI) refers to computer systems engineered to perform tasks that typically demand human intelligence. These tasks span a wide range, from creative writing and data analysis to problem-solving and image recognition. At the heart of many modern AI applications, especially those generating text, are Large Language Models (LLMs). Tools like ChatGPT, Gemini, Claude, and Microsoft Copilot are built on LLMs. An LLM is trained on massive datasets of text to identify patterns, and it then uses those patterns to predict the next word in a sequence, one word after another, producing coherent and contextually relevant responses. It's important to understand that these systems "learn" from their training data; they do not "think" in a human sense, and unless connected to live web search they do not perform real-time searches the way a traditional search engine does.
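The next-word idea can be made concrete with a toy sketch. The Python below is not how ChatGPT or any real LLM is built (those use large neural networks and far more context than one word); it is a minimal bigram model, assumed here purely for illustration, that counts which word follows which in a tiny made-up training text and then predicts from those counts. All function names and the training text are hypothetical.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram_model(text: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts: Dict[str, Counter] = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(model: Dict[str, Counter], start: str, length: int = 8, seed: int = 0) -> str:
    """Build a word sequence by repeatedly sampling a follower of the last word."""
    rng = random.Random(seed)  # fixed seed so a run can be repeated
    output = [start.lower()]
    for _ in range(length - 1):
        followers = model.get(output[-1])
        if not followers:
            break  # nothing in the training text ever followed this word
        choices, weights = zip(*followers.items())
        output.append(rng.choices(choices, weights=weights)[0])
    return " ".join(output)


# Made-up training text, for illustration only.
TRAINING_TEXT = (
    "the cat sat on the mat . the cat chased the mouse . "
    "the dog sat on the rug ."
)

model = train_bigram_model(TRAINING_TEXT)
print(predict_next(model, "the"))     # prints "cat" (the most common follower)
print(predict_next(model, "fusion"))  # prints "None" (word not in the training text)
print(generate(model, "the"))
```

Two things are worth noticing. The model can produce fluent-looking sequences that never appeared in its training text, and it has nothing to say about words it never saw. Both behaviours echo, in miniature, the limitations discussed in this guide.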

Capabilities of AI Tools

AI tools demonstrate impressive capabilities across various domains, making them versatile assistants in many fields. The lesson highlights several key areas where AI excels:

- Creative Writing: AI can generate stories, poems, scripts, and descriptive paragraphs, often mimicking diverse styles and tones.

- Explanatory Tasks: It can simplify complex scientific or academic concepts, tailoring explanations for different audience levels (e.g., explaining fusion to a 6th grader).

- Coding: AI can write basic code, generate functions, and provide annotations, aiding in programming tasks.

- Image Generation: Specialized AI tools can create images from text descriptions, opening new avenues for artistic expression and design.

- Text Refinement: Tools can assist with grammar, style, and conciseness, improving written communication.

- Problem Solving: Some AI can tackle specific problems like mathematical equations.

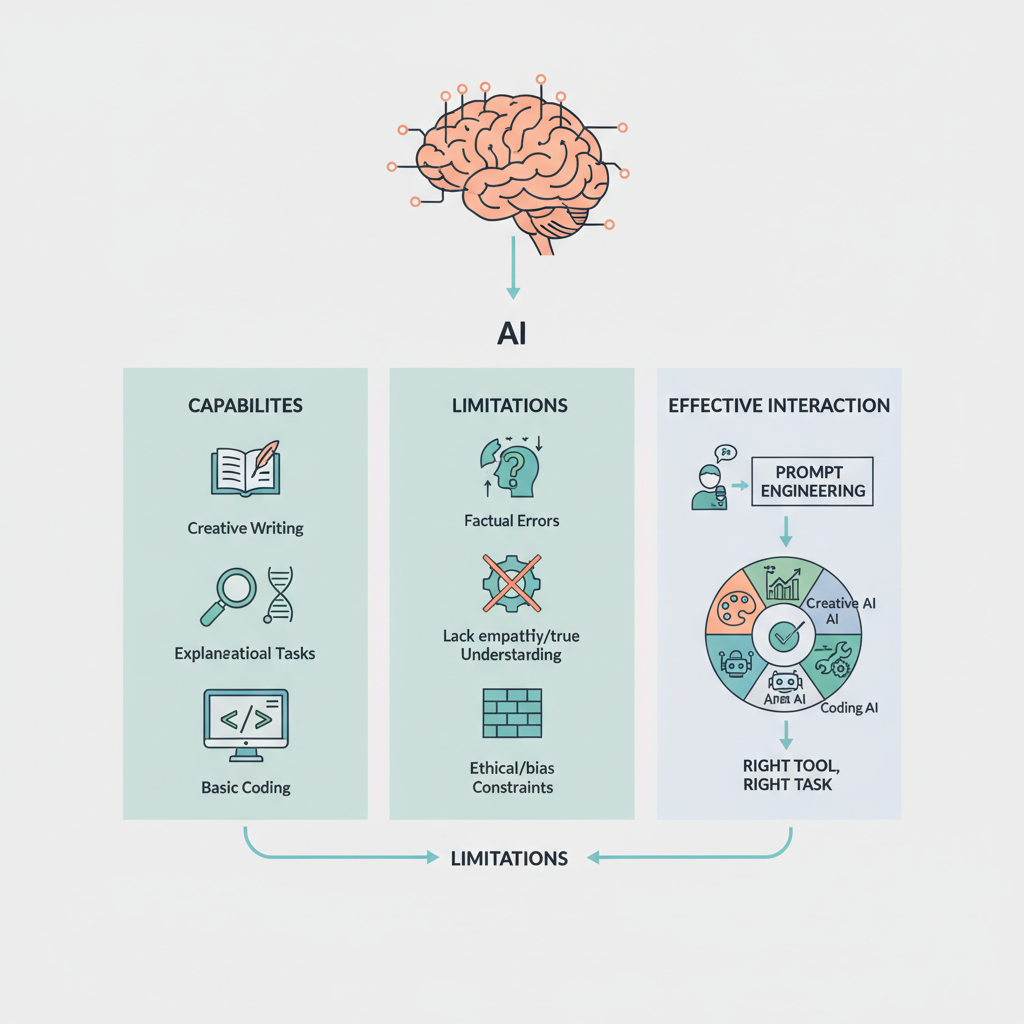

Critical Limitations of AI Tools

Despite their impressive abilities, AI tools are far from perfect and come with significant limitations that users must understand and account for. These limitations are central to the lesson's emphasis on critical evaluation:

- Hallucinations: AI can produce confident but entirely incorrect or fabricated answers. This phenomenon, known as "hallucination," can involve inventing facts, misstating historical events, or citing non-existent sources. Users must always verify AI-generated information.

- Bias: AI models learn from the data they are trained on. If this data contains societal biases, stereotypes, or unbalanced perspectives, the AI can reflect and potentially perpetuate these biases in its outputs. This raises serious concerns about fairness and equitable representation.

- Outdated Information: Many AI tools are limited by the recency of their training data. Unless explicitly connected to live web search capabilities, they may not have information about recent events, scientific discoveries, or contemporary developments, leading to outdated responses.

- No Inherent Source Verification: Unlike search engines that link to original sources, LLMs typically do not cite real-time sources unless specifically prompted. Their answers, even if made up, can sound accurate and authoritative, making independent verification crucial.

Prompt Engineering: Guiding the AI

A critical concept in interacting with AI is prompt engineering. This refers to the skill of crafting effective and precise instructions or questions (prompts) to guide the AI in generating the desired response. The quality and specificity of a prompt directly influence the usefulness and accuracy of the AI's output. A vague prompt will likely yield a vague or generic answer, while a well-engineered prompt can unlock more sophisticated and targeted results. Understanding how words shape AI's response empowers users to leverage AI more effectively.
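The difference between a vague and a specific prompt can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `build_prompt` and its parameters are hypothetical names, the labels ("Audience", "Format", "Constraint") are one possible convention rather than a required syntax, and the code does not call any AI tool. It simply assembles the text a student might paste into one.

```python
from typing import List, Optional


def build_prompt(
    task: str,
    audience: Optional[str] = None,
    output_format: Optional[str] = None,
    constraints: Optional[List[str]] = None,
) -> str:
    """Assemble a prompt string from a task plus optional clarifying details."""
    lines = [task.strip()]
    if audience:
        lines.append(f"Audience: {audience}")
    if output_format:
        lines.append(f"Format: {output_format}")
    for constraint in constraints or []:
        lines.append(f"Constraint: {constraint}")
    return "\n".join(lines)


# A vague prompt: the tool has to guess the audience, length, and style.
vague_prompt = build_prompt("Explain fusion.")

# A more specific prompt based on the lesson's science task.
specific_prompt = build_prompt(
    "Explain the concept of fusion.",
    audience="a 6th grader",
    output_format="one short paragraph that uses an everyday analogy",
    constraints=["avoid equations", "define every technical term you use"],
)

print(vague_prompt)
print("---")
print(specific_prompt)
```

Students can enter both versions into the same tool and record how the added audience, format, and constraint lines change the response.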

Generalist vs. Specialist AI Tools

The document differentiates between two main categories of AI tools:

- General-Purpose AIs: These are broad-spectrum tools like ChatGPT or Gemini that can handle a wide variety of tasks, from creative writing to answering factual questions. They are versatile but might not always be the most effective for highly specialized tasks.

- Specialized AI Tools: These AIs are designed for specific functions, such as image generation (e.g., Copilot Designer), text refinement (e.g., Grammarly, QuillBot), or solving mathematical problems (e.g., Photomath). They often excel in their niche due to tailored training and algorithms. Comparing these types helps students understand the diverse landscape of AI applications.

Ethical and Societal Implications of AI

A significant theme of the lesson is the critical discussion of AI's broader impact. As AI integrates more deeply into daily life, it raises complex ethical and social questions that demand thoughtful consideration:

- Accuracy & Truth: How do we establish truth when AI can generate convincing misinformation? What are the responsibilities of users in verifying AI-generated content?

- Bias & Fairness: How can we prevent AI from reinforcing existing societal biases, and what measures can be taken to ensure fair and equitable outcomes?

- Academic Integrity: When is using AI an appropriate learning aid, and when does it cross the line into dishonesty or plagiarism in educational settings? Establishing clear policies is vital.

- Future Impact: What are the long-term social, economic, and cultural consequences of widespread AI adoption? How might it change industries, employment, and human interaction?

Digital Literacy and Responsible Technology Use

Underlying all these topics is the overarching goal of fostering digital literacy and responsible technology use. In the age of AI, digital literacy extends beyond simply knowing how to use digital tools; it encompasses the ability to:

- Critically evaluate information, especially AI-generated content.

- Understand the underlying mechanisms and limitations of AI.

- Recognize and mitigate biases.

- Adhere to ethical guidelines regarding AI use.

- Navigate the online world safely and honestly.

The lesson aims to equip students with the critical thinking skills necessary to engage with AI as informed, responsible, and ethical digital citizens. This includes being aware of common student misconceptions (e.g., "AI is always right," "AI is just like Google") and learning strategies to overcome them through direct experience and critical discussion.

For a guided walk-through of the core topics, see the In-depth Study Path.

Glossary of Key Terms

Artificial Intelligence (AI)

Computer systems designed to perform tasks that typically require human intelligence, such as writing, analyzing data, solving problems, or recognizing images, by learning from patterns in data.

Large Language Models (LLMs)

A type of AI model, used in tools like ChatGPT or Gemini, that generates responses by predicting the next word in a sequence based on patterns learned from massive datasets of text.

AI Literacy

The understanding of what AI is, how it works, its capabilities, limitations, and ethical implications, enabling critical and responsible interaction with AI tools.

Prompt Engineering

The skill of crafting effective, clear, and specific instructions or questions (prompts) for AI tools to elicit desired and accurate responses, demonstrating how human words shape AI output.

Hallucination

A limitation of AI where it produces confident but incorrect answers, inventing facts, misstating events, or citing non-existent sources, highlighting the need for fact-checking.

Bias in AI

The reflection of stereotypes or unbalanced perspectives in AI-generated content, stemming from biases present in the AI's training data, which can lead to unfair or skewed outputs.

Misinformation

False or inaccurate information generated by AI, which can appear convincing, underscoring the importance of verifying AI-generated content with reliable sources.

Digital Ethics

The set of principles and questions guiding responsible and morally sound behavior in the digital realm, specifically concerning the use, development, and impact of AI on society, truth, and fairness.

Academic Integrity

The commitment to honest and responsible scholarship. In the context of AI, it involves judging when using a tool is a legitimate aid to learning and when it constitutes plagiarism or dishonesty.

General-Purpose AI

AI tools designed to handle a broad range of tasks across different domains, such as creative writing, explanations, and coding (e.g., ChatGPT, Gemini).

Specialized AI

AI tools designed for specific tasks, excelling in a particular niche like image generation (e.g., Copilot Designer), text refinement (e.g., Grammarly, QuillBot), or solving math problems (e.g., Photomath).

Fact-Checking

The process of verifying the accuracy of information by comparing it against reliable, independent sources. It is especially important for AI-generated content, which can be inaccurate while sounding authoritative.

Timeline of Discoveries

1936: Alan Turing invents the theoretical "a-machine," later known as the Turing Machine, an abstract model of computation, laying a foundational concept for modern computing.

- By: Alan Turing

1943: Warren McCulloch and Walter Pitts publish "A Logical Calculus of the Ideas Immanent in Nervous Activity," introducing the concept of artificial neural networks, which are fundamental to modern AI.

- By: Warren S. McCulloch and Walter Pitts

1950: Alan Turing proposes "The Imitation Game," now famously known as the Turing Test, a criterion for determining whether a machine can exhibit intelligent behavior equivalent to that of a human.

- By: Alan Turing

1955: Arthur Samuel develops a checkers-playing program that can learn from experience, marking an early milestone in machine learning.

- By: Arthur Samuel

1955: John McCarthy coins the term "Artificial Intelligence" in a proposal for the Dartmouth Summer Research Project on Artificial Intelligence, which would officially establish AI as a field of study in 1956.

- By: John McCarthy

1957: Frank Rosenblatt develops the Perceptron, an early artificial neural network designed for pattern recognition, initially simulated on an IBM computer.

- By: Frank Rosenblatt

1970: Seppo Linnainmaa publishes the reverse mode of automatic differentiation, the general method underlying the modern backpropagation algorithm used to train artificial neural networks.

- By: Seppo Linnainmaa

1997: IBM's Deep Blue computer defeats reigning world chess champion Garry Kasparov in a six-game match, the first match victory by a computer over a reigning world champion under standard tournament time controls.

- By: IBM (Deep Blue team)

2012: AlexNet, a convolutional neural network, wins the ImageNet Large Scale Visual Recognition Challenge, greatly advancing image recognition and sparking the deep learning revolution.

- By: Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton

2016: Google DeepMind's AlphaGo program defeats world champion Go player Lee Sedol, marking a major milestone in AI's ability to master complex strategy games.

- By: DeepMind (Demis Hassabis, David Silver, and team)

2017: Google researchers introduce the Transformer, a neural network architecture that becomes foundational for Large Language Models (LLMs) and revolutionizes natural language processing.

- By: Ashish Vaswani et al. (Google Brain team)

2022-11-30: OpenAI releases ChatGPT, a large language model chatbot, to the public, which significantly increases public awareness and engagement with generative AI.

- By: OpenAI

Real-World Applications

Technology

AI-powered virtual assistants such as Siri and Google Assistant leverage natural language processing (NLP) to understand voice commands, answer questions, set reminders, and control smart home devices, making daily interactions more intuitive and efficient.

Technology

Image recognition and computer vision AI applications are used for tasks like facial recognition in smartphones, object detection in smart surveillance systems, and visual search tools like Google Lens to identify real-world objects from images.

Technology

AI algorithms play a crucial role in cybersecurity and fraud detection by analyzing network traffic and transaction patterns in real-time to identify anomalies, prevent cyberattacks, and flag fraudulent financial activities, enhancing security for individuals and businesses.

Medicine

AI systems assist in medical diagnostics and imaging analysis by rapidly scanning X-rays, MRIs, and CT scans to flag possible early signs of diseases like cancer, heart conditions, and neurological disorders. On some narrow tasks they can match specialist accuracy at greater speed, but their findings still require review by a clinician.

Medicine

AI enables personalized treatment plans and accelerates drug discovery by analyzing vast datasets of genetic, clinical, and lifestyle information. This helps create tailored therapies for patients and quickly identify potential drug candidates for various diseases.

Engineering

In engineering, AI is used for design optimization and generative design, allowing engineers to simulate and explore thousands of design configurations. This accelerates development cycles, optimizes material use, and improves product performance and sustainability.

Engineering

Predictive maintenance, powered by AI, analyzes real-time sensor data from machinery to forecast potential equipment failures. This enables proactive maintenance, minimizes costly downtime, and extends the operational lifespan of critical assets across industries.

Daily Life

Streaming services like Netflix and Spotify utilize AI-driven recommendation engines to analyze user viewing and listening habits, curating personalized content suggestions to enhance user engagement and satisfaction.

Daily Life

Navigation and traffic management applications, such as Google Maps and Waze, employ AI to process real-time traffic data, predict congestion, and suggest optimal routes and estimated arrival times, making daily commutes smoother and more efficient.

Education

AI-driven personalized learning platforms analyze student performance and engagement to adapt content, create customized learning paths, and provide immediate, tailored feedback, fostering a more effective and individualized educational experience.

Education

AI tools assist with automated grading and assessment, streamlining the evaluation process for educators by providing detailed feedback on assignments and quizzes, saving time and ensuring consistency in student performance assessment.

Key Terms & Concepts

- Artificial Intelligence (AI)

- Scientific Concept of AI

- Definition: Computer systems performing tasks requiring human intelligence

- Large Language Models (LLMs)

- Examples: ChatGPT, Gemini, Claude, Microsoft Copilot

- Mechanism: Predicting next word based on patterns in massive datasets

- Not search engines: Generate responses, don't look up answers

- Capabilities of AI Tools

- Creative writing

- Explaining scientific concepts

- Basic coding

- Image generation

- Text refinement (e.g., Grammarly, QuillBot)

- Solving math problems (e.g., Photomath)

- Limitations of AI Tools

- Hallucinations

- Confident but incorrect answers

- Inventing facts/sources

- Bias

- Reflects biases in training data

- Stereotypes or unbalanced perspectives

- Outdated Information

- Limited by data cutoff date

- Not connected to live web search unless the tool integrates it

- No Source Verification

- Does not cite sources by default; citations given on request may be invented and still need checking

- AI Literacy

- Understanding AI's capabilities and limitations

- Critical evaluation of AI outputs

- Responsible technology use

- Prompt Engineering

- Crafting effective prompts for AI

- Understanding how words shape AI's response

- Importance of clear, specific prompts

- Digital Ethics & Societal Impact of AI

- Accuracy & Truth

- Verifying information from AI (fact-checking)

- Dangers of misinformation (false AI-generated content)

- Bias & Fairness

- Potential for reinforcing stereotypes

- Unfair patterns from training data

- Academic Integrity

- When AI use is helpful vs. dishonest

- Responsibility for AI errors in academic work

- Future of AI

- Bigger picture impact on society

- Types of AI Tools

- General-Purpose AIs

- Examples: ChatGPT, Gemini, Claude, Microsoft Copilot

- Specialist AIs

- Examples: Copilot Designer (image creation), QuillBot (text refinement), Photomath (math problems)

- Critical Thinking

- Evaluating arguments and specific claims

- Assessing validity of reasoning and sufficiency of evidence

- Identifying false statements and fallacious reasoning

- Comparing and contrasting AI outputs

- Reflecting and sharing opinions respectfully

- Responsible Technology Use

- Digital Citizenship

- Awareness of academic honesty

- Understanding benefits and risks of AI

- Common Student Misconceptions and Challenges with AI

- AI is always right

- AI is just like Google

- I don't know how to write a good prompt

- All AI tools are the same

- I can't access the tools

- Instructional Strategies & Components

- AI Detective Challenge (hands-on activity)

- Hallucination Test (demonstration of AI errors)

- Ethics Roundtable (facilitated discussion)

- Differentiation

- Extra Support

- English Language Learners (ELLs)

- Early Finishers/Extensions

- Alternatives for inaccessible AI tools

- Pre-generated AI responses

- Pairing students

- Teacher-led demos

- Offline alternatives

- Teacher Preparation

- Review school AI policy

- Test access to AI tools

- Prepare handouts and sample paragraphs

In-depth Study Path

This is a guided path through the core concepts of the document. Start with Segment 1 and work through the segments in order.

Segment 1: Understanding Artificial Intelligence - Fundamentals and Functionality

This segment introduces the foundational concepts of Artificial Intelligence (AI) and specifically delves into how modern AI tools, particularly Large Language Models (LLMs), operate. It clarifies what AI is, how it processes information, and provides context for its capabilities and limitations.

What is AI?

Artificial Intelligence (AI) refers to computer systems engineered to perform tasks that typically require human intelligence. These tasks are diverse, encompassing abilities such as writing, analyzing complex data, solving intricate problems, or recognizing images. AI systems achieve this by learning from patterns within vast amounts of data, rather than being explicitly programmed for every single scenario.

The Role of Large Language Models (LLMs)

Many of the AI tools widely discussed today, such as ChatGPT, Gemini, Claude, and Microsoft Copilot, are built on Large Language Models (LLMs). These systems are trained on massive datasets of text and code. Their core function is to predict the next word (more precisely, the next token, which may be a whole word or a piece of one) in a sequence, based on the patterns and relationships found in their training data. Repeating this prediction step lets them generate human-like text, answer questions, summarize information, and produce creative content.
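
The next-word idea can be made concrete with a toy model. The sketch below is a minimal illustration, not how production LLMs are built: it counts which word follows which in a tiny made-up corpus (a "bigram" model) and predicts the most frequent follower. The corpus, the function names, and the `max_words` parameter are all assumptions chosen for illustration; real LLMs use neural networks trained on vastly more data.

```python
from collections import Counter, defaultdict

# Tiny made-up "training data" (an assumption for illustration only).
corpus = (
    "the cat sat on the mat . "
    "the cat chased the mouse . "
    "the cat slept on the sofa ."
)

def train_bigram_model(text):
    """Count, for every word, which words follow it and how often."""
    follow_counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def generate(model, start, max_words=5):
    """Repeat the prediction step to extend `start` by up to `max_words` words."""
    words = [start]
    for _ in range(max_words):
        next_word = predict_next(model, words[-1])
        if next_word is None or next_word == ".":
            break
        words.append(next_word)
    return " ".join(words)

model = train_bigram_model(corpus)
print(predict_next(model, "the"))          # cat
print(generate(model, "on", max_words=2))  # on the cat
```

The toy model only repeats patterns from its training text and has no notion of whether a sentence is true, which is one way to see why fluent output is not the same as accurate output.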

AI vs. Search Engines: A Key Distinction

It's crucial to understand that LLMs are not search engines. A search engine retrieves existing information from the internet and provides links to its sources. In contrast, LLMs generate responses based on what they've learned from their training data. Unless a tool is explicitly connected to web search, they don't 'look up' answers online in real time. This fundamental difference is vital for critically evaluating AI output, as it affects the accuracy and verifiability of the information the AI provides.

Segment 2: Capabilities, Limitations, and Effective Interaction with AI

Building upon the understanding of AI fundamentals, this segment explores the practical applications of AI tools while critically examining their inherent shortcomings. It also introduces the vital skill of 'prompt engineering' and the importance of selecting the right AI tool for a given task.

Diverse Capabilities of AI Tools

AI tools demonstrate a wide range of capabilities, making them valuable assets across various fields. Students will explore tasks such as:

- Creative Writing: Generating stories, poems, or descriptive paragraphs.

- Explanatory Tasks: Simplifying complex scientific concepts for different audience levels (e.g., explaining fusion to a 6th grader).

- Basic Coding: Writing simple functions or providing annotated code snippets.

- Image Generation: Creating images from textual descriptions using specialized tools.

- Text Refinement: Enhancing grammar, style, and clarity in written content.

- Problem Solving: Assisting with specific analytical or mathematical challenges.

Critical Limitations of AI

Despite their impressive abilities, AI tools have significant limitations that users must be aware of:

- Hallucinations: AI can produce confidently stated but incorrect, fabricated, or nonsensical information. This includes inventing facts, misstating historical events, or citing non-existent sources. The 'Hallucination Test' serves as a practical demonstration of this flaw.

- Bias: AI models learn from their training data. If this data contains societal biases (e.g., stereotypes, underrepresentation), the AI can perpetuate these biases in its outputs, leading to unfair or skewed responses.

- Outdated Information: Many AI tools are limited by the cutoff date of their training data. They may not have access to the latest events, research, or developments unless specifically integrated with real-time web search capabilities.

- Lack of Source Verification: Unlike traditional search engines, LLMs typically do not cite sources for their answers unless explicitly prompted, and citations produced on request can themselves be invented. Their responses can sound authoritative even if entirely made up, necessitating independent verification.
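
The bias limitation in the list above can be illustrated with a small counting exercise. This is a hedged sketch, not a real AI system: the training sentences are invented and deliberately lopsided, and the function names are hypothetical. It shows how a pattern-based predictor simply reproduces whatever imbalance its data contains.

```python
from collections import Counter

# Made-up, deliberately lopsided training sentences (an assumption for illustration).
training_sentences = [
    "the engineer said he would help",
    "the engineer said he was late",
    "the engineer said he agreed",
    "the engineer said she would help",
]

def pronoun_counts(sentences, role):
    """Count which word follows '<role> said' across the training sentences."""
    counts = Counter()
    for sentence in sentences:
        words = sentence.split()
        for i in range(len(words) - 2):
            if words[i] == role and words[i + 1] == "said":
                counts[words[i + 2]] += 1
    return counts

def most_likely_pronoun(sentences, role):
    """Pick the most frequent follower, mirroring the imbalance in the data."""
    counts = pronoun_counts(sentences, role)
    return counts.most_common(1)[0][0] if counts else None

counts = pronoun_counts(training_sentences, "engineer")
print(counts)                                               # Counter({'he': 3, 'she': 1})
print(most_likely_pronoun(training_sentences, "engineer"))  # he
```

Nothing in the code is "prejudiced"; the skew comes entirely from the data, which is why balanced data and human review both matter.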

Prompt Engineering: Guiding the AI

Prompt engineering is the art and science of crafting effective and precise instructions or questions for AI models. The quality and specificity of a user's prompt directly impact the relevance, accuracy, and usefulness of the AI's response. Learning to write clear, detailed, and well-structured prompts is crucial for unlocking AI's potential and achieving desired outcomes. Experimentation and refinement are key to mastering this skill.
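
One way to practice specificity is to treat a prompt as a task plus explicit details. The sketch below is only an illustration under assumed names: `build_prompt` and its parameters are hypothetical helpers, not part of any AI tool's API, and the resulting text would still need to be pasted into whichever tool the class is using.

```python
def build_prompt(task, audience=None, output_format=None, constraints=()):
    """Assemble a specific prompt from a task plus optional details."""
    parts = [task.strip()]
    if audience:
        parts.append(f"Audience: {audience}.")
    if output_format:
        parts.append(f"Format: {output_format}.")
    for rule in constraints:
        parts.append(f"Constraint: {rule}.")
    return "\n".join(parts)

# A vague prompt leaves the AI to guess the audience, length, and style.
vague_prompt = build_prompt("Explain fusion.")

# A specific prompt states those choices explicitly.
specific_prompt = build_prompt(
    "Explain nuclear fusion.",
    audience="a 6th grader",
    output_format="three short sentences",
    constraints=["avoid jargon", "include one everyday comparison"],
)

print(vague_prompt)
print("---")
print(specific_prompt)
```

Comparing the responses to the two prompts side by side is a quick way to see how wording shapes the output.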

Generalist vs. Specialist AI Tools

AI tools are not all the same. They can be broadly categorized:

- General-Purpose AIs: Tools like ChatGPT or Gemini are designed to handle a wide array of tasks across various domains. They are versatile but may not always be optimal for highly specific needs.

- Specialist AIs: These tools are developed for particular functions, such as Copilot Designer for image creation, Grammarly or QuillBot for text refinement, or Photomath for solving mathematical problems. They often excel in their niche due to specialized training and algorithms.

Segment 3: AI's Impact on Society - Digital Literacy, Ethics, and Responsibility

This final segment examines the broader implications of AI's integration into society, emphasizing the critical skills needed for responsible engagement. It covers the essential elements of digital literacy in the age of AI and addresses the complex ethical dilemmas that arise.

Digital Literacy in the AI Era

In today's technologically advanced world, digital literacy extends beyond basic computer skills. For AI, it specifically means developing the ability to:

- Critically Evaluate Content: Not accepting AI-generated information at face value, but rather questioning its accuracy, relevance, and potential biases.

- Understand AI Mechanics: Knowing how AI works at a fundamental level, including its pattern-recognition basis and distinction from human intelligence or search engines.

- Recognize Limitations: Being aware of phenomena like hallucinations, inherent biases, outdated information, and the lack of automatic source citation.

- Apply Fact-Checking: Routinely verifying AI-generated data, claims, or arguments using reliable, independent sources.
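
The fact-checking habit in the list above can be sketched as a simple comparison against a trusted reference. This is a minimal illustration under stated assumptions: the reference years come from this guide's timeline, the "AI claims" are invented for the demo, and `fact_check` is a hypothetical helper. Real fact-checking means consulting several reliable, independent sources rather than a single lookup table.

```python
# Trusted reference values (years taken from this guide's timeline).
TRUSTED_YEARS = {
    "Deep Blue defeats Kasparov": 1997,
    "Transformer architecture introduced": 2017,
    "ChatGPT released": 2022,
}

def fact_check(claims, reference):
    """Label each claimed year as 'confirmed', 'contradicted', or 'unverified'."""
    results = {}
    for event, claimed_year in claims.items():
        if event not in reference:
            results[event] = "unverified"    # no trusted source covers this claim
        elif reference[event] == claimed_year:
            results[event] = "confirmed"
        else:
            results[event] = "contradicted"
    return results

# Hypothetical AI output; the wrong and unknown entries are invented for the demo.
ai_claims = {
    "Deep Blue defeats Kasparov": 1997,
    "ChatGPT released": 2021,
    "First robot teacher hired": 2019,
}

report = fact_check(ai_claims, TRUSTED_YEARS)
for event, verdict in report.items():
    print(f"{event}: {verdict}")
```

The "unverified" label matters as much as "contradicted": a claim that no reliable source supports should not be treated as true just because nothing disproves it.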

Ethical Questions and Considerations

The pervasive presence of AI raises profound ethical and social questions that require thoughtful discussion and critical analysis. These include:

- Accuracy & Truth: How do we establish what is true when AI can convincingly generate false or misleading information? What responsibilities do individuals and AI developers have in preventing the spread of misinformation?

- Bias & Fairness: Given that AI can inherit and amplify biases from its training data, how can we ensure that AI systems are fair and do not perpetuate or exacerbate societal inequalities, stereotypes, or discrimination?

- Academic Integrity: When is the use of AI in educational contexts considered a helpful learning tool, and when does it cross the line into dishonesty, plagiarism, or hindering genuine learning? This necessitates clear policies and student understanding of responsible use.

- Responsibility for Errors: If an AI provides an incorrect answer that leads to negative consequences (e.g., in homework, medical advice, or legal consultation), who is ultimately accountable: the AI, the user, or the developer?

- Societal and Future Impact: What are the long-term implications of AI on employment, creativity, human decision-making, privacy, and the overall structure of society? How should we collectively guide its development to maximize benefits while mitigating risks?

Fostering Responsible Technology Use

Ultimately, navigating the world with AI requires a commitment to responsible technology use. This means making informed decisions about when and how to deploy AI, understanding its benefits and risks, upholding academic honesty, and actively engaging in discussions about its ethical implications. By doing so, individuals can become empowered and ethical digital citizens capable of harnessing AI's potential for positive change.

Interactive Quiz

Test your knowledge on the core concepts of AI literacy. Select the best answer for each question and submit to see your score.

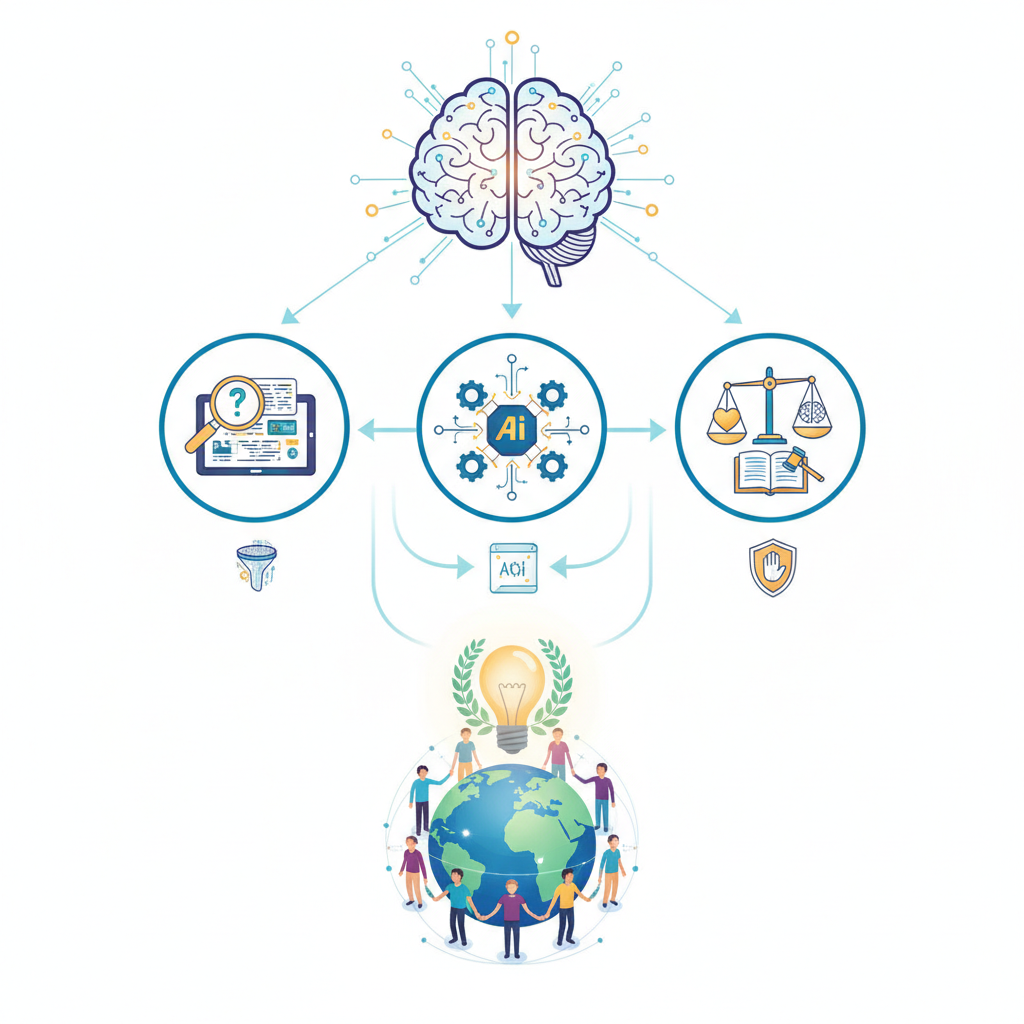

Illustration for Segment 1: Understanding Artificial Intelligence - Fundamentals and Functionality

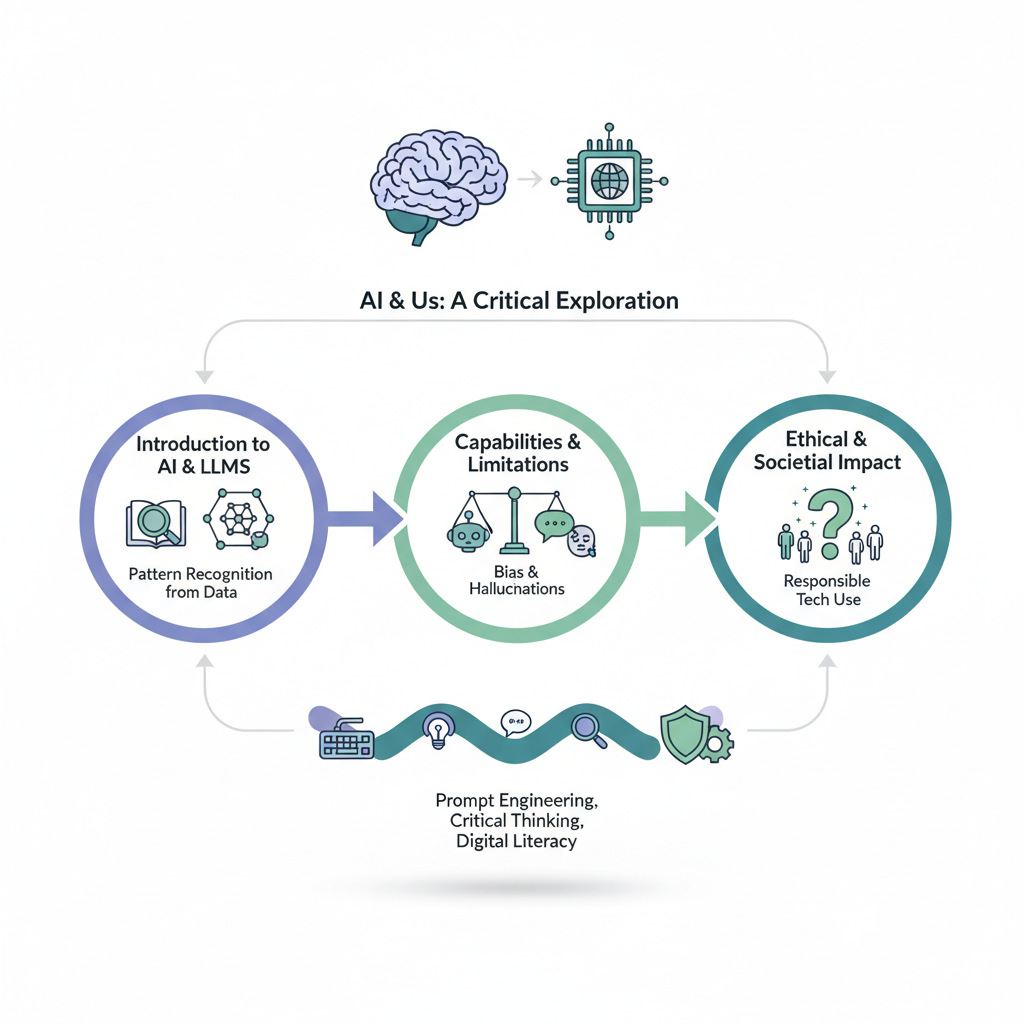

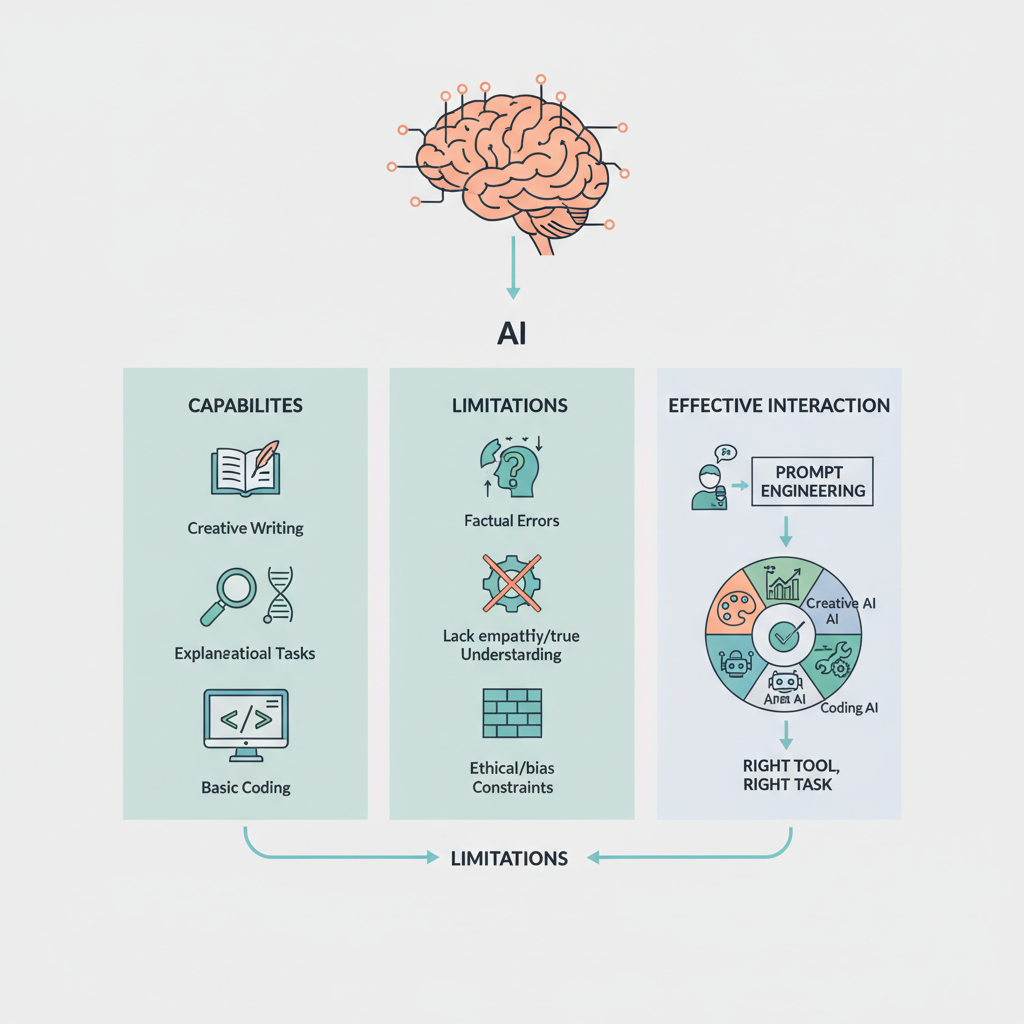

Illustration for Segment 2: Capabilities, Limitations, and Effective Interaction with AI

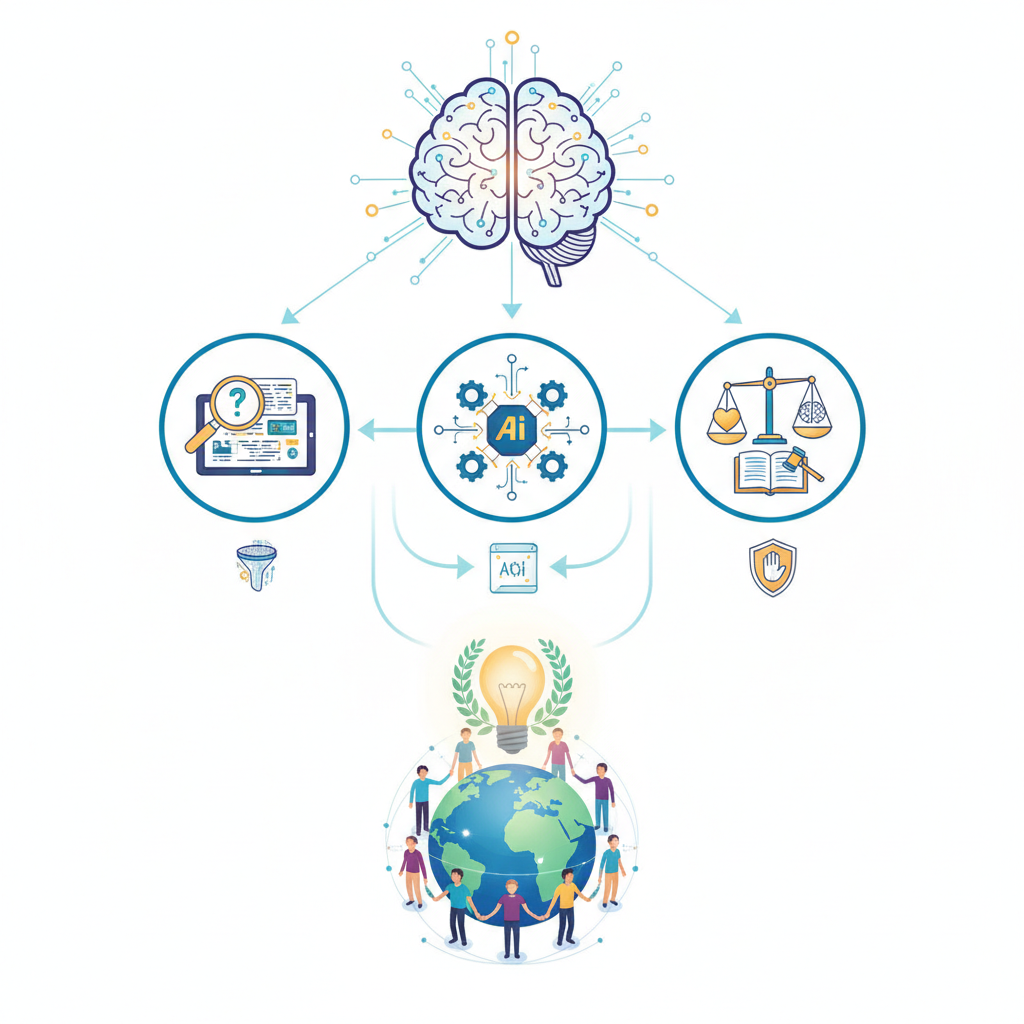

Illustration for Segment 3: AI's Impact on Society - Digital Literacy, Ethics, and Responsibility
